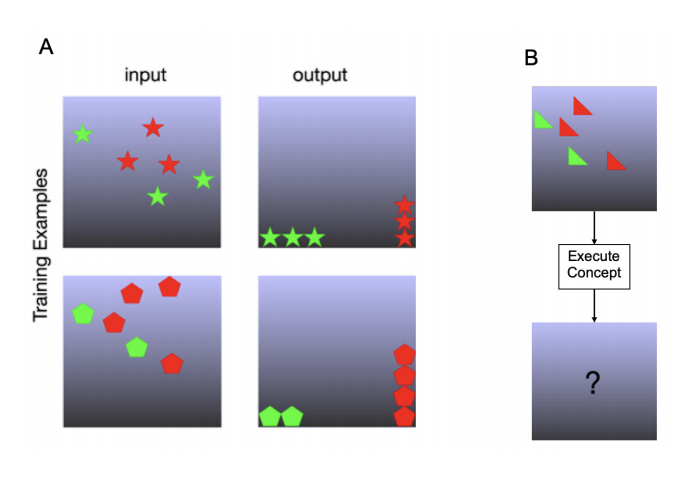

A Model of Fast Concept Inference with Object-Factorized Cognitive Programs

Abstract

The ability of humans to quickly identify general concepts from a handful of images has proven difficult to emulate with robots. Recently, a computer architecture was developed that allows robots to mimic some aspects of this human ability by modeling concepts as cognitive programs built from an instruction set of primitive cognitive functions. This allowed a robot to emulate human imagination by simulating candidate programs in a world model before generalizing to the physical world. However, this model used a naive search algorithm that required 30 minutes to discover a single concept and became intractable for programs with more than 20 instructions. To circumvent this bottleneck, we present an algorithm that emulates the human cognitive heuristics of object factorization and sub-goaling, enabling human-level inference speed, improving accuracy, and making the output more explainable.